WORKSHOPS

Workshop 1: Identifying and analysing evidence to determine whether tasks elicit the intended constructs: bridging the gap between modern validity theory and innovative validation practice.

Fully Booked

Stuart Shaw & Ezekiel Sweiry

Establishing that assessment tasks elicit performances that reflect the intended constructs is a fundamental component of assessment validation. However, literature on this question is dominated by theoretical perspectives, with little practical guidance for practitioners. In addition, recent developments in the assessment landscape, including the emergence of technological advances (e.g. process data) and novel forms of assessment (including 21st-century skills such as collaboration and self-reflection), mean that established guidance may not adequately address contemporary requirements.

Workshop 2: Exploring feedback dialogues for a transformative feedback culture.

Sam Passeport, Andrew Watts, Nathalie Younès, Constanze Höpfner & Marianne Talbot

This interactive pre-conference workshop invites participants to critically engage with formative assessment or Assessment for Learning (AfL) by focusing on the articulation and shared understanding of feedback. Research highlights feedback as a powerful intervention (Hattie, 2009; Hattie & Timperley, 2007), yet its impact varies. Some approaches view feedback as information transfer, while others see it as a process. Building on the latter, this session examines feedback dialogues through a socio-material lens, exploring how relationships, power dynamics, tools, technologies, and institutional structures shape feedback encounters.

Workshop 3: Your best friend the psychometrician: The preventive role of psychometrics in test development.

Marieke van Onna, Bas Hemker, Cor Sluijter

This workshop will help you gain further insight into the advantages of involving a psychometrician early when setting up a new testing program. Non-psychometricians will find out how many more issues they can bring to their friendly neighbourhood psychometrician. For psychometricians, the workshop may help to increase their added value.

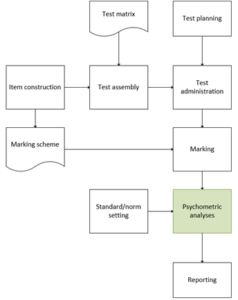

During the workshop, we will use a scheme of all activities involved in test development. We’ll discuss several general psychometric topics, and relate these to the decisions you will have to make for these activities. We’ll show in which way a psychometrician might contribute to each activity. In each block, we’ll give guidelines and illustrate best practices. We’ll invite you to share your experiences with the topics and ask us for advice.

No R, no formulas, still all psychometrics.

Workshop 4: From awareness to action: embedding inclusive assessment in teacher development programs in higher education.

Celine van der Lienden & Laurinde Koster

In today’s diverse learning environments, inclusive assessment is essential to ensure fair and valid learning outcomes. Moreover, assessment should support higher education students in their learning process, enabling them to demonstrate their knowledge and skills. Inclusive assessment practices align with broader educational values such as contributing to a more accessible and equitable society.

Workshop 5: Establishing valid qualification equivalency with qualitative judgement.

Stuart Gallagher and Georgie Billings

Where statistical equating methods are not available, through lack of common items or common candidates, but equivalency between two qualifications is required, it can be difficult to provide robust evidence.

Session 1 of the workshop focuses on a novel standard setting methodology that allowed for IGCSE scores to be translated into Mississippi end-of-course performance levels and integrated into the state accountability system. The method draws on aspects of both Body of Work (BoW) and Bookmarking methods to create an operationally feasible process.

Session 2 explores why there is a need for qualification equivalency, how a qualification can be broken down into content, demand and awarding standards, and some possible methodologies for establishing standards equivalency when the data for psychometric equating are not available.

Workshop 6: Network Analysis for the investigation of Rater Effects (using R).

Iasonas Lamprianou

This workshop introduces the application of Network Analysis (NA) to rater-mediated assessments. NA analyzes rating datasets by considering pairwise comparisons between raters.

Participants will learn how to detect and interpret key rater behaviors, including severity/leniency, inconsistency (misfit), halo effects, bias, drift (changes over time), and the formation of rater sub-communities. A key feature of the workshop is the comparison of NA results with those from traditional approaches such as the Rasch model.

NA is a flexible method that can handle nominal, dichotomous, ordinal, and numeric data. Unlike traditional models that rely on strong assumptions (e.g., local independence or unidimensionality), NA operates with minimal requirements, making it especially suitable for complex or non-standard rating contexts. Visualizations further enhance interpretability.

The workshop emphasizes hands-on experience using open-source R code and real datasets from published studies. A brief theoretical overview will also be provided. Participants are encouraged to bring their laptops and follow along.
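To give a flavour of what "pairwise comparisons between raters" can mean in practice, here is a minimal sketch. It is not the presenter's method or the workshop's R code. It is written in Python purely for illustration, and the data, the function name `pairwise_edges` and the two edge statistics are all assumptions. Each pair of raters who marked the same scripts becomes an edge, described by how often they agree exactly and by how far apart their scores are on average.

```python
from itertools import combinations

# Hypothetical ratings, invented for illustration: rater -> {script_id: score}
ratings = {
    "R1": {"s1": 3, "s2": 4, "s3": 2, "s4": 5},
    "R2": {"s1": 3, "s2": 4, "s3": 3, "s4": 5},
    "R3": {"s1": 1, "s2": 2, "s3": 1, "s4": 3},
}


def pairwise_edges(ratings):
    """Return {(rater_a, rater_b): (exact_agreement_rate, mean_score_difference)}.

    Only scripts marked by both raters in a pair are compared.
    A positive mean difference means rater_a scored higher than rater_b.
    """
    edges = {}
    for a, b in combinations(sorted(ratings), 2):
        shared = sorted(set(ratings[a]) & set(ratings[b]))
        if not shared:
            continue  # no common scripts, so no edge between these raters
        diffs = [ratings[a][s] - ratings[b][s] for s in shared]
        agreement = sum(d == 0 for d in diffs) / len(diffs)
        mean_diff = sum(diffs) / len(diffs)
        edges[(a, b)] = (agreement, mean_diff)
    return edges


edges = pairwise_edges(ratings)
for pair, (agreement, mean_diff) in edges.items():
    print(pair, agreement, mean_diff)
```

In this toy dataset R3 sits consistently below both other raters, which is the kind of pattern one might read as relative severity. A real analysis would need far more data and a principled way of weighting and interpreting the edges.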

Identifying and analysing evidence to determine whether tasks elicit the intended constructs: bridging the gap between modern validity theory and innovative validation practice.

Stuart Shaw & Ezekiel Sweiry

Establishing that assessment tasks elicit performances that reflect the intended constructs is a fundamental component of assessment validation. However, literature on this question is dominated by theoretical perspectives, with little practical guidance for practitioners. In addition, recent developments in the assessment landscape, including the emergence of technological advances (e.g. process data) and novel forms of assessment (including 21st-century skills such as collaboration and self-reflection), mean that established guidance may not adequately address contemporary requirements.

Through a combination of presentations, discussion and group activities, this workshop explores the challenges in identifying and analysing validation evidence to determine whether assessment tasks elicit performances that reflect the intended constructs. Participants will explore a range of validation evidence sources, from traditional statistical and qualitative approaches to innovative methods like process data and eye-tracking. Attendees will work through real-world scenarios, critically examining the alignment between task design and cognitive processes, and considering the implications of emerging technologies on validation practices.

Participants will leave with a deeper understanding of how to strengthen their validation arguments, navigate trade-offs in assessment design, and leverage both classic and emerging techniques to enhance validity in diverse educational contexts.

WORKSHOP TITLE: Identifying and analysing evidence to determine whether tasks elicit the intended constructs: bridging the gap between modern validity theory and innovative validation practice.

Presenters: Stuart Shaw and Ezekiel Sweiry

Presenters’ Bios (500 words max per presenter)

Stuart Shaw is an educational assessment consultant, researcher, and author, and is Honorary Professor at University College London in the Institute of Education – Curriculum, Pedagogy & Assessment. Before becoming an independent consultant, he worked for international awarding organisations for over 20 years, where he was particularly interested in demonstrating how educational, psychological, and vocational tests seek to meet the demands of validity, reliability, and fairness. He has around 150 publications in English second language assessment and in educational and psychological research journals. He is Chair of the Board of Trustees of the Chartered Institute of Educational Assessors (CIEA) and a Fellow of the CIEA. Stuart is a Fellow of the Association for Educational Assessment in Europe (AEA-Europe), an elected member of the Council of AEA-Europe, and Chair of its Scientific Programme Committee.

Ezekiel Sweiry is an Associate Director at Ofqual, a non-ministerial government department that regulates qualifications, examinations and assessments in England. He has over 25 years’ experience in assessment, with the majority of those years spent in paper-based and digital test development and item validity research. He has worked for the three largest UK awarding bodies, where he has been involved in the development of a range of high-stakes assessments and responsible for the training of examiners. His particular research interests include the factors that affect the difficulty and accessibility of test items, the item and mark scheme features that affect marking reliability, and the comparability of paper-based and digital assessments.

Why AEA members should attend this workshop:

Despite the primacy of validity as a theoretical concept and the increasing number of conceptual frameworks designed to guide validation effort, there is relatively little in the way of practical guidance for would-be validators: good validation studies still prove surprisingly challenging to conceptualise, let alone to implement (Lissitz, 2009). Accordingly, calls for a more pragmatic approach to validation abound (Kane, 2013; 2006).

One key validation research question, often given scant attention due to the challenge it presents, seeks to determine whether assessment tasks elicit the intended test constructs. Evidence garnered in support of such a validity claim is often limited to marker reports or basic consideration of item-level statistics. For example, candidate response process validation evidence is relatively scarce (Hubley & Zumbo, 2011; Zumbo & Chan, 2014) – though this situation is changing with the advent of increasingly sophisticated sources of assessment evidence (Zumbo, 2017). This challenge has arguably resulted in a lack of practical guidance, both in terms of the nature of the post-assessment evidence that can be collected, and the ways in which it might be analysed.

As well as this lack of practical guidance, the question of determining whether assessment tasks elicit the intended test constructs is particularly pertinent for two further reasons. First, recent technological advances in assessment have made available a number of more novel evidence sources, such as candidate process data and eye tracking. These sources, derived from richly situated learning environments, have the potential to offer a more fully developed articulation of “assessment” and enhance the capacity to make more valid and accurate decisions about student learning and pedagogy.

Second, the question merits increased attention due to the emergence of more novel forms of assessment. 21st century skills (e.g. collaborative problem-solving, creativity, critical thinking, technology literacy and decision-making), “hard-to-measure” complex, interactive performances, classroom-based formative assessment, and computer-based testing are making the process of validation a more multi-faceted, complex endeavour.

This workshop takes a very practical orientation, exploring challenges in identifying and analysing validation evidence that seeks to address the validity question: Do the assessment tasks elicit performances that reflect the intended constructs? In doing so, the workshop attempts to bring a range of validation techniques to life.

Who this workshop is for:

The responsibility of assessment providers to demonstrate robust and thorough validity evidence is a long-established expectation (Messick, 1992, p.89), as are warnings about the “potentially serious consequences” (Kane, 2009, p.61) of shirking such responsibilities. Even assessment providers, and users of such assessments, that have limited resources still have a responsibility to demonstrate the quality and validity of their assessments. This workshop is intended to make the complexities of validation theory and practice less challenging and more readily operational. Accordingly, the workshop is relevant to many key actors in educational assessment: test developers who specify the content and format of tasks; examination officials, frequently working within statutory agencies or approved assessment providers/awarding bodies; policy makers charged with formulating, leading and funding assessment; and researchers and postgraduate students with a wide variety of assessment interests.

Overview of workshop (max. 600 words):

The workshop divides into four parts, each comprising sessions that afford group activity, reflection, and discussion.

Part 1 offers a brief introductory overview of assessment validation. Important questions are raised which will punctuate the workshop throughout, including:

- How is it possible to judge whether a qualification has necessary and sufficient validity? How much validity is sufficient validity?

- What is the relationship between validity and validation?

- What makes for a sound validity/validation argument?

- What is validation evidence and what do we mean by analysing validation evidence?

Part 2 offers an overview of sources of validation evidence, categorised into four broad types: Statistical, Qualitative, Documentary, and Innovative. Example approaches from each category will be presented, with a focus on how each evidence source can be analysed and interpreted to determine whether tasks elicit performances that reflect the intended constructs.

In Part 3, participants will be presented with several different scenarios based on four sources of innovative validation evidence:

- ‘Hard-to-measure’ skills (such as ‘collaboration’ and ‘self-reflection’), which refer to the personal, social, and emotional attributes, skills and dispositions that enhance cognitive abilities and academic performance.

- Impact (in English second language assessment), which refers to the consequential aspects of validity. Consequential validity focusses on three main areas: differential validity, washback and effects on society; in other words, the effects and consequences within the educational system and within society more widely.

- Process data, which refers to any data automatically collected about test takers’ response processes, such as response times and the actions performed by the test-taker (e.g. keystrokes, mouse clicks, selection, and navigation).

- Eye tracking data, which refers to the information gathered from tracking the test taker’s gaze as they interact with assessment material, and includes metrics such as fixation points (where the eyes stop to focus) and saccades (quick eye movements between fixations).

For each of the four scenarios, attendees will have the opportunity to analyse and interpret authentic assessment data. Working in groups, they will determine what can be concluded about the cognitive processes that are elicited, and the extent to which those processes align with the intentions of the assessment. Attendees will also have the opportunity to reflect on the potential and limitations of each type of evidence in relation to eliciting evidence of construct validity, as well as the barriers (e.g. costs, resources) to utilising each type of evidence.
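As a rough illustration of what a first pass over process data might involve, the sketch below turns a small event log into a response time and an action count per item. It is not taken from the workshop materials. The log format, the event names (`item_open`, `item_submit`, `keystroke`) and the function `summarise` are hypothetical, and the sketch assumes each item is opened once before it is submitted.

```python
from collections import defaultdict

# Hypothetical event log, invented for illustration:
# (candidate, item, event, timestamp in seconds)
log = [
    ("c1", "q1", "item_open", 0.0),
    ("c1", "q1", "keystroke", 4.0),
    ("c1", "q1", "keystroke", 5.5),
    ("c1", "q1", "item_submit", 12.0),
    ("c1", "q2", "item_open", 12.0),
    ("c1", "q2", "item_submit", 15.0),
]


def summarise(log):
    """Return {(candidate, item): {"response_time": seconds, "actions": count}}.

    Response time runs from item_open to item_submit. Every other event
    recorded for the item in between is counted as an action.
    """
    opened = {}
    counts = defaultdict(int)
    summary = {}
    for candidate, item, event, timestamp in log:
        key = (candidate, item)
        if event == "item_open":
            opened[key] = timestamp
        elif event == "item_submit":
            summary[key] = {
                "response_time": timestamp - opened[key],
                "actions": counts[key],
            }
        else:
            counts[key] += 1
    return summary


summary = summarise(log)
for key, stats in summary.items():
    print(key, stats)
```

An item answered in a few seconds with no recorded actions, like the hypothetical `q2` here, might prompt the question of whether the candidate engaged in the intended cognitive process at all. Summaries like this can only raise such questions; interpreting them still relies on the other evidence sources discussed in the workshop.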

Part 4 explores issues relating to:

- How empirical evidence and logical analysis are used to test the claims in a validation argument in order to evaluate its overall strength,

- How to identify the kinds of evidence and analysis that can be used either to falsify or to support a measurement argument claim,

- How we know that a qualification is measuring what it is supposed to be measuring, and

- How traditional (classic) and innovative techniques can be combined.

The discussion will broaden to encompass future directions of validation methodology including the use of digitised technologies such as Artificial Intelligence.

The workshop will conclude with discussion activities relating to fundamental validation concerns such as:

- why it is very hard to judge (a) what kind of validation evidence is necessary and (b) how much is sufficient;

- the inclusion of consequences of testing as a legitimate source of validation evidence or analysis;

- ways to optimise validity;

- inevitable assessment design trade-offs and compromises and their impact on validity;

- ‘validation-by-design’ versus ‘validation-of-design’, and the relationship between validity and reliability, fairness and practicality.

Preparation for the workshop:

Useful (though not essential) preparatory reading:

Cook, D. A., & Hatala, R. (2016). Validation of educational assessments: a primer for simulation and beyond. Advances in Simulation, 1, 31. https://doi.org/10.1186/s41077-016-0033-y.

Crisp, V., & Shaw, S. (2012). Applying methods to evaluate construct validity in the context of A level assessment. Educational Studies, 38(2), 209–222. http://dx.doi.org/10.1080/03055698.2011.598670.

All texts will be provided for participants in advance of the workshop.

Proposed Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 09.00 | ARRIVAL & WELCOME | Stuart Shaw & Ezekiel Sweiry |
| 09.30 – 10.00 | PART 1: INTRODUCTION. What is validation? How is it possible to judge whether a qualification has necessary and sufficient validity? To what degree are evidence and analysis consistent with the overarching validation (measurement) claim? | Stuart Shaw |
| 10.00 – 10.45 | PART 2: SOURCES OF VALIDATION EVIDENCE. Overview of sources of evidence and analysis for validation research. Statistical: types of correlational analysis; distractor analysis; Differential Item Functioning; Rasch modelling. Qualitative: subject expert analysis and judgement; analysis of candidate responses; think-aloud protocols. Documentary: literature reviews; syllabus and paper development documentation; assessment evaluation reports. Innovative: cognitive laboratory experiments (e.g. cognitive interviewing, think-aloud protocols and vignettes, cognitive demand, behavioural observations, interviews); physiological (e.g. eye tracking); psycho-sensory; process data (e.g. computer-generated log files); impact (with a focus on Critical Language Assessments). | Stuart Shaw & Ezekiel Sweiry |
| 10.45 – 11.00 | TEA/COFFEE BREAK | |
| 11.00 – 11.45 | ACTIVITY AND REFLECTION (1). Participants will consider in groups a number of realistic scenarios, with a view to reflecting on the following prompts: Which sources of evidence would you use to stake a claim of validity with respect to whether the tasks elicit performances that reflect the intended constructs? Why would you choose these sources of evidence? What other sources of evidence might you use? What constraints might impact on your choice? | Stuart Shaw & Ezekiel Sweiry |
| 12.00 – 13.00 | LUNCH | |
| 13.00 – 14.05 | PART 3: EXEMPLIFYING SOURCES OF VALIDATION EVIDENCE: EXAMPLES. Participants, working with authentic data, will be presented with four scenarios based on the following innovative validation approaches: candidate ‘reflection’ and/or ‘collaboration’ scripts; impact instrumentation; eye tracking; process data. | Stuart Shaw & Ezekiel Sweiry |
| 14.05 – 14.30 | ACTIVITY AND REFLECTION (2). What empirical evidence and logical analyses are required to stake a claim of validity for a qualification? What are the relative merits and demerits of the practical examples explored? Why and how would you employ these types of evidential sources in your own contexts? | Stuart Shaw & Ezekiel Sweiry |
| 14.30 – 14.45 | TEA/COFFEE BREAK | |
| 14.45 – 16.00 | PART 4: DISCUSSION. Participants will be asked to reflect in groups on the workshop and to respond to semi-structured prompts such as: How can validation techniques be combined? Looking to the future and AI. | Ezekiel Sweiry |
| 16.00 – 16.30 | ACTIVITY AND REFLECTION (3). Issues for discussion will include: What have we learned? Who gets to judge sufficient validity, and through what due process? How little validation evidence can we rely upon? Should we rely upon? Do we rely upon? | Stuart Shaw |

References

Hubley, A. M., & Zumbo, B. D. (2011). Validity and the consequences of test interpretation and use. Social Indicators Research, 103(2), 219–230. https://doi.org/10.1007/s11205-011-9843-4.

Kane, M. T. (2013). Validating the interpretations and uses of test scores. Journal of Educational Measurement, 50(1), 1–73.

Kane, M. T. (2009). Validating the interpretations and uses of test scores. In R. W. Lissitz (Ed.), The Concept of Validity (pp. 39–64). Charlotte, NC: Information Age.

Kane, M. T. (2006). Validation. In R. L. Brennan (Ed.), Educational Measurement (4th ed., pp. 17–64). Westport, CT: American Council on Education/Praeger.

Lissitz, R. W. (Ed.). (2009). The Concept of Validity: Revisions, New Directions, and Applications. Charlotte, NC: Information Age Publishing.

Messick, S. (1992). Validity of test interpretation and use. In M. C. Alkin (Ed.), Encyclopedia of Educational Research (6th ed.). New York: Macmillan.

Zumbo, B. D., & Chan, E. K. H. (Eds.). (2014). Validity and Validation in Social, Behavioral, and Health Sciences. Springer International Publishing/Springer Nature. https://doi.org/10.1007/978-3-319-07794-9.

Exploring feedback dialogues for a transformative feedback culture.

Sam Passeport, Andrew Watts, Nathalie Younès, Constanze Höpfner & Marianne Talbot

This interactive pre-conference workshop invites participants to critically engage with formative assessment or Assessment for Learning (AfL) by focusing on the articulation and shared understanding of feedback. Research highlights feedback as a powerful intervention (Hattie, 2009; Hattie & Timperley, 2007), yet its impact varies. Some approaches view feedback as information transfer, while others see it as a process. Building on the latter, this session examines feedback dialogues through a socio-material lens, exploring how relationships, power dynamics, tools, technologies, and institutional structures shape feedback encounters.

Participants will engage with key research on feedback literacies, gaining insights into how feedback cultures are enacted and experienced by students and assessors. Through discussions and activities, they will reflect on their feedback experiences and explore strategies for fostering meaningful, dialogue-based interactions.

Designed for educational researchers and practitioners—including teachers, lecturers, and curriculum coordinators in secondary and higher education—this session requires no prior expertise, just curiosity and an interest in student agency and dialogic feedback.

By the end, participants will have a deeper understanding of formative feedback as a relational, situated practice and leave with practical strategies to cultivate sustainable, dialogue-rich assessment cultures.


WORKSHOP TITLE: Exploring feedback dialogues for a transformative feedback culture

Presenters:

- Sam Passeport (she/her)

- Andrew Watts (he/him)

- Nathalie Younès (she/her)

- Constanze Höpfner (she/her)

- Marianne Talbot (she/her)

Presenters’ Bios (500 words max per presenter):

Sam Passeport

Sam works as an Instructional Designer at Tilburg University in the Netherlands. She is also a Professional Doctoral candidate with the University of Dundee in Scotland. She investigates the (missed) feedback encounter between undergraduate students and their teachers from a critical theory point of view, using interpretative phenomenological analysis. With over 13 years of experience in international education (PK-12 and higher education), Sam promotes a relational and critical approach to coaching and dialogic feedback, creating space for connections and true dialogues between students and teachers. Sam has also been Chair of the Assessment Cultures SIG at AEA-Europe since December 2024.

Andrew Watts

Dr. Andrew Watts began his career as a teacher of English in secondary schools in the UK. After eleven years he moved to Singapore where he taught in a Junior College for over four years. He then worked for five years in the Ministry of Education in Singapore, focusing on curriculum development and in-service teacher development. In 1990 he returned to England and worked with Cambridge Assessment from 1992 to 2008. From 2004 he set up and ran the Cambridge Assessment Network, which provides professional development opportunities for assessment professionals internationally. Since leaving Cambridge Assessment he has worked on numerous assessment projects on a freelance basis. In 2019 he completed work for a PhD on the history of examinations in England, based at the University of Cambridge Faculty of Education. He has made various contributions to AEA-Europe conferences including running pre-conference workshops. He was one of the initiators of the Assessment Cultures Special Interest Group within the Association and continues to serve on the Steering Group for that SIG.

Nathalie Younès

Nathalie Younès is a professor of education sciences at the University of Clermont-Auvergne (UCA) in France. Her research focuses on assessment for learning and ecological assessment in higher education. She directs the ACTe laboratory’s theme: “Design and Evaluation of Tools and Systems” and a research programme on higher education. She designs and delivers the teacher training programme for teachers and pedagogical advisors at UCA. She has led several collaborative research projects with primary and secondary school teachers and higher education pedagogical advisors to develop assessment for and as learning practices and identify the conditions for their effective development. Between 2016 and 2021, she was president of ADMEE-Europe, a French-speaking network of researchers, evaluators, trainers and teachers who question the methodology of evaluation in education systems.

Constanze Höpfner

Constanze works as an instructional designer and junior assessment specialist at the Faculty of Humanities and Digital Sciences of Tilburg University. She collaborates with a wide range of stakeholders such as lecturers, education policy staff, and the university’s Network for Educational Development and Innovation. As part of a Dutch national initiative (‘Smarter Academic Year’), she works towards stimulating assessment practices that reduce pressure on students and lecturers, promote critical thinking, and better integrate assessment and curriculum design.

Marianne Talbot

Marianne is a post-transfer PhD researcher at the University of Leeds School of Education. Her area of interest is the impact of professional development in educational assessment on assessors, including qualified teachers, university lecturers, and those assessing in professional workplace environments. Her doctoral research focuses on the personal assessment practice, feedback landscape, and unique assessment ecologies of Chartered Educational Assessors who are also secondary school teachers in England. She is researching their influence as educational assessment experts on the practice of others around them, their individual perceptions of assessment expertise, their confidence to deploy that expertise, and their assessment agency and identity. Her experience is in qualifications, curriculum, and assessment development and evaluation, project management including impact and risk assessments, independent evaluation of outreach and widening participation activities, and course leadership for the Chartered Institute of Educational Assessors, based at the University of Hertfordshire. More information is available on LinkedIn and in her researcher profile at the University of Leeds.

Why AEA-E members / conference delegates should attend this workshop:

AEA members/conference delegates should join this workshop to: (1) explore assessment feedback cultures, (2) learn from the latest empirical and theoretical research insights regarding formative assessment, Assessment for Learning, and feedback, and (3) co-create practical strategies for implementation in their own contexts.

- Explore Assessment Feedback Cultures: Feedback or Formative Assessment is more than information being transferred from teachers to students. Nowadays, formative feedback cultures aim to center dialogic processes, for instance, through novel assessment models like Programmatic Assessment (van der Vleuten et al., 2012) and using a variety of tools (rubrics, coversheets, AI) and processes (self-assessment, peer learning, coaching).

- Research Insights on Feedback: Much research has been carried out in the past few decades, shifting research on feedback from a focus on teachers and their actions, and then students’ self-regulation and feedback recipiency, to finally arrive at the importance of a partnership and shared responsibility between students and teachers through feedback literacy/ies.

- Co-Create and Take Away Practical Strategies: Participants will contribute insights, practices, and reflections from their own contexts and engage in collaborative exercises and solution design. Through interactive activities and a co-creation session (experimenting with feedback tools, prototyping and sharing ideas for implementation on a digital wall), attendees will leave with tangible strategies and resources to implement in their institutions.

Who this Workshop is for:

This workshop is designed for educational researchers and practitioners, including teachers, lecturers, and programme or curriculum coordinators in secondary schools and higher education (vocational and university settings). It is ideal for those interested in exploring dialogic, relational, and socio-material approaches to feedback to enhance assessment practices in their institutions.

Overview of workshop (500-600 words):

This pre-conference workshop (PCW) will examine the critical role of feedback dialogues in shaping assessment cultures. While feedback is often framed as a simple transmission of information between teachers and students, a learning-centered approach emphasizes its emotional, relational, and situated nature (Winstone & Carless, 2019). Feedback is not merely a cognitive process but first and foremost a socially situated practice influenced by human interactions, institutional structures, and material conditions (Gravett, 2022), all of which participants will explore.

Process

The workshop consists of three sessions following the Design Thinking process, culminating with a co-creation session that will employ bricolage (Kincheloe & Berry, 2004) as a reflective (Schön, 1983) and hands-on inquiry approach to help participants translate insights into actionable strategies. This approach has been recognized as an effective professional learning practice (Campbell, 2018).

Alignment with the Conference Theme

Formative assessment is regarded as one of the most effective interventions for enhancing student learning (Black & Wiliam, 1998; Hattie, 2009). However, research highlights several barriers to feedback engagement, as reported by Winstone et al. (2017), such as students lacking the strategic skills for feedback uptake, as well as course design problems.

Recent research emphasizes the need to design feedback for uptake (Winstone & Carless, 2019) and to foster a shared responsibility for the feedback process (Nash & Winstone, 2017; Winstone, Pitt & Nash, 2020). This workshop will address these complexities, promoting feedback dialogues that enhance inclusive assessment practices (Tai et al., 2022). In doing so, it touches upon design elements, as well as themes relating to equity and inclusion.

Workshop Structure

- Session 1: Feedback Cultures & Dialogues (Empathize & Define)

The workshop begins with an exploration of feedback cultures through listening to audio material capturing students’ voices, enabling participants to empathize with students’ experiences. They will examine feedback cultures through different contextual layers (Bronfenbrenner, 1979) and the question of psycho-socio-technical mediations to be considered in a culture of emancipatory evaluation (Younès, 2020, 2025). By reflecting on their own experiences as feedback recipients and providers, participants will explore feedback dialogues, recognizing that student agency and engagement are shaped by both social interactions and material conditions. Using research on feedback literacies as a socio-material practice (Gravett, 2022) and mini-case studies, they will critically analyze missed feedback opportunities and the role of relational pedagogies (Biesta & Tedder, 2007).

- Session 2: Bricolage Session (1/2) – Ideate

In this co-creative session, participants will reflect on their own contexts, examining how socio-material factors (e.g. relationships, emotions, identity, power, norms, time, space, tools, and structures) shape feedback encounters. Engaging in dialogic feedback processes such as peer coaching, they will collaboratively explore strategies for enhancing feedback interactions and fostering relational trust.

- Session 3: Bricolage Session (2/2) – Prototype & Test

Participants will engage in a group activity to examine tools and co-create actionable plans for transforming feedback cultures. Through structured reflection and collaborative exercises, they will adapt feedback practices to their institutional contexts. For example, administrators who lead programs will be able to create intentional structures to support teachers and lecturers in more intentional processes for feedback engagement and uptake, and teachers/lecturers will be able to use (modifiable) tools and strategies in the classroom, to promote students’ engagement with feedback. The session will conclude with the creation of tangible takeaways, including a collective digital wall (e.g. Padlet), fostering a sense of community and commitment to implementing feedback dialogues.

By the end of the workshop, participants will have developed a deeper understanding of feedback as a relational, situated practice. They will leave with practical strategies to cultivate sustainable, dialogue-rich feedback cultures in their assessment contexts.

Preparation for the workshop:

Tentative Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 9.30-12.00 (Inc. a 15 min break 10:30-11:00) | Block I Feedback Cultures & Dialogues [Empathise and Define] | Sam Passeport, Marianne Talbot, Nathalie Younès |
| 12.00-13.00 | Lunch | |
| 13.00-14.30 | Block II ‘Bricolage’ Session (1/2) [Ideate & Prototype] | Sam Passeport, Andrew Watts |
| 14.30-14.45 | Tea/coffee break | |
| 14.45-16.30 | Block III ‘Bricolage’ Session (2/2) [Prototype and Test] | Constanze Höpfner, Sam Passeport |

References

- Black, P. and Wiliam, D. (1998) ‘Inside the black box: Raising standards through classroom assessment’, Phi Delta Kappan, 80(2), pp. 139-148.

- Bronfenbrenner, U. (1979) The ecology of human development. Harvard: Harvard University Press.

- Campbell, L. (2018) ‘Pedagogical bricolage and teacher agency: Towards a culture of creative professionalism’, Educational Philosophy and Theory, 51(1), pp. 31-40.

- Gravett, K. (2022) ‘Feedback literacies as sociomaterial practice’, Critical Studies in Education, 63(2), pp. 261-274.

- Hattie, J. (2009) Visible Learning: A Synthesis of Over 800 Meta-Analyses Relating to Achievement. Routledge.

- Hattie, J. and Timperley, H. (2007) ‘The Power of Feedback’, Review of Educational Research, 77(1), pp. 81-112.

- Kincheloe, J. L. and Berry, K. S. (2004) Rigour and complexity in educational research: conceptualising the bricolage. Maidenhead, Berkshire: Open University Press.

- Schön, D. (1983) The Reflective Practitioner: How Professionals Think in Action. London: Temple Smith.

- van der Vleuten, C. P., Schuwirth, L. W., Driessen, E. W., Dijkstra, J., Tigelaar, D., Baartman, L. K. and van Tartwijk, J. (2012) ‘A model for programmatic assessment fit for purpose’, Med Teach, 34(3), pp. 205-14.

- Winstone, N. and Carless, D. (2019) Designing Effective Feedback Processes in Higher Education: A Learning-Focused Approach. Routledge.

- Younès, N. (2025). Vers une évaluation émancipatrice dans un écosystème formatif. Dans J.F. Marcel et Gremion, C. (Dir.), Évancipation dans l’institution. Oser le rapprochement entre émancipation et évaluation (p. 169-183). Editions Cépaduès.

- Younès, N. (2020). Vers une évaluation écologique dans l’enseignement supérieur : dispositifs et transformations en jeu. [Note de synthèse pour l’habilitation à diriger des recherches]. Nancy, Université de Lorraine. France. tel-03032417v1.

Your best friend the psychometrician: The preventive role of psychometrics in test development.

Marieke van Onna, Bas Hemker, Cor Sluijter

This workshop will help you to gain further insight into the advantages of involving a psychometrician early when setting up a new testing program. It is useful for non-psychometricians to find out how many more issues they can bring to their friendly neighbourhood psychometrician. For psychometricians, the workshop may help to increase their added value.

During the workshop, we will use a scheme of all activities involved in test development. We’ll discuss several general psychometric topics, and relate these to the decisions you will have to make for these activities. We’ll show in which way a psychometrician might contribute to each activity. In each block, we’ll give guidelines and illustrate best practices. We’ll invite you to share your experiences with the topics and ask us for advice.

No R, no formulas, still all psychometrics.

APPENDIX A: Template pre-conference workshop

WORKSHOP TITLE:

Your best friend the psychometrician: The preventive role of psychometrics in test development

Presenters:

Marieke van Onna, Bas Hemker, Cor Sluijter

Presenters’ Bios (500 words max per presenter):

Marieke, Bas and Cor

- Studied psychology and wrote a Ph.D. thesis on a psychometric topic

- Have at least 20 years of experience at Cito, where they work or worked at the psychometric department CitoLab

- Are fellows at AEA-Europe

- Have given numerous lectures, classes, and workshops in the Netherlands, as well as in many other countries on five continents.

- Are involved in a wide variety of educational measurement projects that require a combination of statistics, psychometric skills and knowledge of educational practice.

- Love working with motivated colleagues on improving educational assessments.

Marieke

- Wrote her thesis on Bayesian estimation of latent class models and nonparametric IRT

- Worked a few years as an assistant professor for statistics and methodology in psychology before joining Cito

- Coordinates all psychometric analyses that are necessary for the national exams in Dutch secondary education

- Apart from that, works on some smaller testing programs

Bas

- Has been the head of the psychometric department of Cito

- Is involved in CitoLab International, Cito’s international department

- Works at Cito, specialized as an educational measurement researcher, with quality of school exams as one of his projects

- Is a member of the Dutch Committee on Test Matters (COTAN)

- Was a member for many years of the Professional Development Committee of AEA Europe

Cor

- Is also a former head of the psychometric department of Cito

- Works as an independent consultant on test quality and test use

- Is a lecturer in educational measurement at Fontys University of Applied Sciences

- Is an external member of several Exam Boards of various universities in the Netherlands

- Has been the Vice-President of AEA-Europe since November 2024

Why AEA-E members / conference delegates should attend this workshop:

As Ronald Fisher said: To consult the statistician after an experiment is finished is often merely to ask him to conduct a post mortem examination. He can perhaps say what the experiment died of.

Read ‘psychometrician’ for ‘statistician’, and ‘educational test’ for ‘experiment’.

Psychometricians like to work in prevention!

This workshop will help you to gain further insight into the advantages of involving a psychometrician early when setting up a new testing program. It is useful for non-psychometricians to find out how many more issues they can bring to their friendly neighbourhood psychometrician. For psychometricians, the workshop may help to increase their added value.

No R, no formulas, still all psychometrics.

Who this Workshop is for:

Anybody involved in setting up (new) exams, tests and testing programs: managers, project leaders, psychometric leads, test developers, practitioners in the field of educational testing. No mathematical pre-knowledge necessary.

(Max. 25 participants.)

Overview of workshop (500-600 words):

Psychometricians are often asked to ‘run the analyses’. However, they can only present sensible output tables if they know what meaning will be attached to the results. Who are the stakeholders, and what type of message do you want to convey to each of them? Therefore, psychometricians will ask you ‘what is the purpose of your test?’, shortly followed by ‘what is the format of your test report, for each stakeholder?’, as the full meaning of the test lies only in the use of the reports by the stakeholders.

In order to run their analyses, psychometricians need data, attributes, classifications, higher-level information and, most importantly, information on decisions. All decisions you make during the development of a testing program influence the quality of the data and the options for reporting. For example, you can’t report on subdomains if your test matrix does not include a balanced representation of them: your psychometrician will start complaining about too few score points and large measurement errors.

If you have a preventative discussion with a psychometrician when setting up a testing program, they’ll point out the implications of decisions, and allow you to make an informed decision. They can also help with improving the quality of the data, because they know: “Garbage in, garbage out”.

During the workshop, we will use the figure above as a scheme of all activities involved in test development. We’ll discuss several general psychometric topics, and relate these to the decisions you will have to make for these activities. We’ll show in which way a psychometrician might contribute to each activity.

After an introduction to the test development scheme, we’ll discuss the topics below. In each block, we’ll give guidelines and illustrate best practices. We’ll invite you to share your experiences with the topics and ask us for advice.

- Validity and the desired coherence between report purpose, test matrix and tasks, by means of the evidence-centered design model and constructive alignment

- Different kinds of standard setting and the implied reporting options. Standards may be relative or absolute, and standard setting procedures may be test-, item-, population- or person-based.

- Threats to validity in the case of missing values or differential item functioning. Several reasons why candidates do not answer questions are discussed. Subgroups of candidates may differ in general ability level. Differential item functioning, however, refers to deviating scores on a single question.

- Reliability, and the effect of rater agreement. We’ll distinguish between global and local reliability, and the relation with the purpose of the test. In the case of questions that cannot be marked automatically, rater agreement might be an issue. A psychometrician can advise on the number of raters that are needed, or detect items that need better marking schemes.

- When (not) to use adaptive testing. We’ll discuss the pros and cons of adaptive testing. We’ll include the difference between full adaptive testing and multi-stage testing. In addition we’ll discuss automated test assembly (ATA), which may also be useful in non-adaptive settings.
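As a rough illustration of the kind of advice mentioned under reliability and rater agreement, the number of raters needed is sometimes approximated with the Spearman-Brown formula. The formula is an assumption brought in here for illustration (the workshop description does not name a method), and the function names below are hypothetical. It treats raters as interchangeable, which real marking data may not support.

```python
import math


def spearman_brown(single_rater_reliability: float, n_raters: int) -> float:
    """Projected reliability of the average of n_raters interchangeable raters."""
    r = single_rater_reliability
    if not 0.0 < r < 1.0:
        raise ValueError("single_rater_reliability must lie strictly between 0 and 1")
    if n_raters < 1:
        raise ValueError("n_raters must be at least 1")
    return n_raters * r / (1 + (n_raters - 1) * r)


def raters_needed(single_rater_reliability: float, target: float) -> int:
    """Smallest number of raters whose averaged score reaches the target reliability."""
    r = single_rater_reliability
    if not (0.0 < r < 1.0 and 0.0 < target < 1.0):
        raise ValueError("both reliabilities must lie strictly between 0 and 1")
    # Spearman-Brown solved for the number of raters.
    k = target * (1 - r) / (r * (1 - target))
    # Small tolerance so that an exact integer solution is not rounded up.
    return max(1, math.ceil(k - 1e-9))


if __name__ == "__main__":
    print(spearman_brown(0.6, 2))
    print(raters_needed(0.6, 0.8))
```

With a single-rater reliability of 0.6, two raters give a projected reliability of about 0.75, and three raters are needed to reach 0.8.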

We’ll finish with a list of what annoys psychometricians most (e.g. lacking item version administration).

Preparation for the workshop:

Please think about the following questions beforehand:

- What assessment program are you working on, or planning to work on?

- Which problems do you experience in your program?

- Which part of the test cycle is malfunctioning, or missing, in your program?

- What do you think is/might be the contribution of a psychometrician for your program?

Tentative Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 9.30-12.00 (Inc. a 15 min break 10:30-11:00) | Block I: Introduction to test cycle; Validity (ECD, constructive alignment); Reporting (standard setting) | Marieke, Bas, Cor |
| 12.00-13.00 | Lunch | |
| 13.00-14.30 | Block II: Reliability and rater agreement | Marieke, Bas, Cor |
| 14.30-14.45 | Tea/coffee break | |
| 14.45-16.30 | Block III: Automated test assembly | Marieke, Bas, Cor |

From awareness to action: embedding inclusive assessment in teacher development programs in higher education.

Celine van der Lienden & Laurinde Koster.

In today’s diverse learning environments, inclusive assessment is essential to ensure fair and valid learning outcomes. Moreover, assessment should support higher education students in their learning process, enabling them to demonstrate their knowledge and skills. Inclusive assessment practices align with broader educational values such as contributing to a more accessible and equitable society.

To achieve such inclusive assessment practices, it is crucial that educators are supported in developing the necessary awareness, knowledge, and skills. Teacher development programs play a key role in this process. In the Netherlands, structured development trajectories are used to build assessment literacy among university teaching staff, with inclusive assessment increasingly integrated as a central theme.

This workshop focuses on how inclusive assessment can be meaningfully integrated into teacher development programs in higher education. Led by an educational measurement expert and an inclusive education specialist from Risbo (Erasmus University Rotterdam), we take an evidence-based approach by translating research into practical strategies for program and course design, assessment construction, and grading. Through interactive activities and peer dialogue, participants will explore how inclusive assessment can be implemented in their own context, with concrete tools that contribute to fairer, more valid assessment practices and improved student learning outcomes.

APPENDIX A: Template pre-conference workshop

WORKSHOP TITLE:

From awareness to action: embedding inclusive assessment in teacher development programs in higher education

Presenters:

Celine van der Lienden, MSc (Risbo, Erasmus University Rotterdam), and Laurinde Koster, MA (Risbo, Erasmus University Rotterdam)

Presenters’ Bios (500 words max per presenter):

Celine van der Lienden: Celine van der Lienden is an education advisor at Risbo, Erasmus University Rotterdam, where she specialises in assessment innovation, curriculum development and teacher training in higher and secondary education. Her focus is on feedback design, programmatic assessment, and the integration of emerging technologies such as AI in assessment practices. A key part of her work involves developing and facilitating professional development programs for academic teachers, with a particular focus on strengthening assessment literacy. With a strong focus on the learning function of assessment, she helps institutions rethink traditional approaches and adopt more holistic and student-centred strategies. Her role often includes guiding lecturers through complex design questions, translating educational theory into practical, context-sensitive solutions. Celine holds a Master’s in educational science from Radboud University and Educational Measurement from Fontys University of Applied Sciences. She holds a bachelor’s degree in Pedagogical Sciences from Erasmus University Rotterdam, which forms the foundation of her educational thinking. Combining theoretical expertise with practical experience, Celine is committed to strengthening assessment as a tool for student learning. She brings a thoughtful, analytical perspective to complex educational challenges and works closely with educators to help shape assessment practices that are meaningful, future-oriented, and pedagogically sound.

Laurinde Koster: Laurinde Koster (MA) is an educational consultant and trainer with experience in higher education, committed to fostering inclusive and innovative teaching practices. Since 2017, she has worked at Risbo, Erasmus University Rotterdam, where she advises faculties, lecturers, and external partners on course design, blended and hybrid learning, and educational innovation. As a trainer, she develops and delivers professional development programs, including University Teaching Qualification (UTQ) and Basic Examiner Qualification (BEQ) trajectories, PhD training programs, and teacher workshops. Her work is rooted in a strong belief in inclusive education, with a focus on supporting educators in creating learning environments that embrace diversity, accessibility and inclusion. She has guided teaching teams in developing inclusive curricula and assessment practices that recognize and value diversity as assets in the classroom. With an international academic background—including a Master’s in Multilingualism (University of Groningen), a Master’s in English and Education (University of Copenhagen), and a bachelor’s in education with TESOL—Laurinde combines theoretical insight with hands-on experience. She brings a practical and empathetic approach to her work, characterized by creativity and a deep commitment to educational equity and sense of belonging.

Why AEA-E members / conference delegates should attend this workshop:

By connecting theory to practice, this session provides concrete tools and frameworks that participants can apply in their own institutions. Participants will engage with peers across contexts, share challenges and solutions, and leave with actionable insights and strategies to enhance fairness and quality in assessment design and practice. The workshop will have a focus on professionalising educators through structured development trajectories.

Who this Workshop is for:

All interested parties: researchers, practitioners, higher education teachers, policy makers, educational advisors.

Overview of workshop (500-600 words):

In an increasingly diverse higher education landscape, inclusive assessment has become a key element for promoting fairness, improving validity, and supporting all students in demonstrating their knowledge and skills. Inclusive assessment aims to ensure that students, regardless of background or ability, can demonstrate their learning fairly. However, “historically, assessment has struggled to meet the needs of student diversity in higher education” (McArthur, 2016; Nieminen, 2022). Moving beyond accommodations, inclusive assessment advocates for proactive design. As emphasized, “assessment should be designed to be inclusive for all students in the first place – not retrospectively” (Hanafin et al., 2007; Nieminen, 2022). This approach aligns with the principles discussed in Assessment for Inclusion in Higher Education (Ajjawi et al., 2022), which highlight the need for equity-driven, responsive practices in assessment design. Therefore, achieving inclusive assessment practices requires more than policy—it requires an investment in teacher professionalization to enable them to design inclusive assessment practices. Educators need structured opportunities to build their assessment literacy, develop inclusive mindsets, and acquire practical strategies to apply in their daily practice. This workshop explores how inclusive assessment can be integrated as a central theme in teacher development programs in higher education. Drawing on experience with professionalization trajectories in the Netherlands, we discuss how such programs can raise awareness and create meaningful impact on learning and assessment quality. The workshop is led by an educational measurement expert and an inclusive education specialist from Risbo (Erasmus University Rotterdam).

Structure of the workshop

The workshop starts with a short introduction to the key principles of inclusive assessment and its importance in higher education. Drawing on current research and international frameworks such as Universal Design for Learning (UDL), we reflect on how inclusive assessment contributes not only to fairness and validity, but also to learning outcomes. Participants will be invited to reflect on their own context and share current practices and challenges. In the second part, the focus is on the design of teacher development programs at Risbo. Using examples from our work in the Dutch higher education context, participants will analyze professionalization interventions and frameworks, and discuss what elements could be adapted or applied in their own institutional or national context. The final part of the workshop is hands-on. Participants will engage with practical tools and resources designed to support inclusive assessment in course design, exam construction, and grading. Through peer dialogue and group discussion, they will reflect on how these tools can be integrated into professional development programs and how to create impact within their own institutions.

Outcomes and Takeaways

By the end of the workshop, participants will:

- Understand the value of inclusive assessment for fairness, validity, and student learning

- Be familiar with strategies for embedding inclusive assessment into teacher development programs

- Gain practical tools to support educators in developing inclusive assessment practices

- Exchange ideas and approaches with peers working in various international contexts

This workshop is relevant for researchers, practitioners, higher education teachers, assessment specialists, policy makers, and education advisors involved in the professionalization of educators and the improvement of assessment in higher education.

References

- Ajjawi, R., Tai, J., Boud, D., & Jorre de St Jorre, T. (Eds.). (2022). Assessment for inclusion in higher education: Promoting equity and social justice in assessment. Routledge. https://doi.org/10.4324/9781003293101

- Hanafin, J., Shevlin, M., Kenny, M., & McNeela, E. (2007). Including young people with disabilities: Assessment challenges in higher education. Higher Education, 54(4), 435–448. https://doi.org/10.1007/s10734-006-9005-9

- McArthur, J. (2016). Assessment for social justice: The role of assessment in achieving social justice. Assessment & Evaluation in Higher Education, 41(7), 967–981. https://doi.org/10.1080/02602938.2015.1053429

- Nieminen, J. H. (2022). Assessment for inclusion: Rethinking inclusive assessment in higher education. Teaching in Higher Education, 27(8), 1038–1052. https://doi.org/10.1080/13562517.2021.2021395

Preparation for the workshop:

No preparation required.

Tentative Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 9.30-12.00 (Inc. a 15 min break 10:30-11:00) | Block I | Celine van der Lienden and Laurinde Koster |
| 12.00-13.00 | Lunch | |
| 13.00-14.30 | Block II | Celine van der Lienden and Laurinde Koster |
| 14.30-14.45 | Tea/coffee break | |
| 14.45-16.30 | Block III | Celine van der Lienden and Laurinde Koster |

Establishing valid qualification equivalency with qualitative judgement.

Stuart Gallagher and Georgie Billings

Where statistical equating methods are not available, through lack of common items or common candidates, but equivalency between two qualifications is required, it can be difficult to provide robust evidence.

Session 1 of the workshop focuses on a novel standard setting methodology that allowed for IGCSE scores to be translated into Mississippi end-of-course performance levels and integrated into the state accountability system. The method draws on aspects of both Body of Work (BoW) and Bookmark methods to create an operationally feasible process.

Session 2 explores why there is a need for qualification equivalency, how a qualification can be broken down into content, demand and awarding standards, and some possible methodologies for establishing standards equivalency when the data for psychometric equating is not available.

The practical activity will involve delegates becoming comfortable with using the CRAS framework to evaluate the demand of questions, and how to set up, run and evaluate a comparative study using the No More Marking platform. We will discuss the benefits and limitations of this methodology for establishing demand equivalency, and what follow-up work can usefully be done with the results.

APPENDIX A: Template pre-conference workshop

WORKSHOP TITLE:

Establishing valid qualification equivalency with qualitative judgement

Presenters:

Stuart Gallagher and Georgie Billings

Presenters’ Bios (500 words max per presenter):

Stuart Gallagher:

Stuart Gallagher is a Principal Assessment Advisor in the International Education group at Cambridge University Press & Assessment. His responsibilities include advising on assessment issues for the US market, evidencing and developing the technical quality of large-scale, high-stakes international assessments, and innovating practice around reporting, analysing and articulating candidate performance and awarding standards.

He has wide experience of UK assessment, having worked in Research and Technical Standards and as a Chair of Examiners for OCR. This includes assessment design and development, general qualification reform, awarding standards in vocational and technical qualifications, and major process change for key operational activities.

Before moving into educational assessment, Stuart taught French and Spanish, having gained a Postgraduate Certificate in Education and Qualified Teacher Status at the Faculty of Education in Cambridge. As a secondary school MFL practitioner and head of department, his key interests were innovating and improving curriculum design and delivery, developing use of data for reporting and analysis, and introducing immersion-style teaching of modern languages.

Stuart holds a BA in French and Linguistics from the University of York and an MEd in Researching Practice from the University of Cambridge.

Georgie Billings:

Georgie Billings is Head of Assessment Quality within the Assessment Reform Group at Cambridge University Press & Assessment. The team enacts assessment reform projects for ministries, school groups and NGOs globally. Her responsibilities include heading up the quality assurance and research programme for accredited qualifications, advising on standard setting across a wide range of tests in regions including Africa, Asia and the Middle East, and heading up reform projects in contexts such as the Cox’s Bazar refugee camps.

Georgie has a wealth of prior experience, including teaching, working for the UK educational charity The Prince’s Trust and working for the Department for Education.

Georgie holds a BA (Hons) in English, a PGCE (Cantab) in Secondary English, an MEd (Cantab) in Researching Practice, and RITTech accreditation as a data analyst. She has completed post-graduate studies in Creative Writing and Forensic Chemistry, and is currently finishing her MSc in International Development at the University of Edinburgh.

Why AEA-E members / conference delegates should attend this workshop:

Where statistical equating methods are not available, through lack of common items or common candidates, but equivalency between two qualifications is required, it can be difficult to provide robust evidence. This workshop explores some methodologies for doing this, based on real experiences in the field, and will provide practical tools that can be applied to other qualifications.

Who this Workshop is for:

Anyone interested in establishing comparability or equivalency in the demand or awarding standard of qualifications through non-psychometric methodologies, particularly across different international contexts.

Overview of workshop (500-600 words):

Part 1: In 2022, the US Department of Education approved Cambridge IGCSE assessments in English Language, Mathematics and Biology to be administered in place of the corresponding high school end-of-course tests within the Mississippi state testing program, known as the MAAP. Cambridge IGCSE and the MAAP represent the different educational testing traditions in the UK and the US: Cambridge IGCSE assessments include a significant number of constructed response and multi-part items and have a strong focus on evaluating candidate-generated evidence, while MAAP end-of-course tests are grounded in psychometric tradition and make considerable use of field-tested banks of selected-response items.

This section of the workshop focuses on a novel standard setting methodology that allows for IGCSE scores in these subjects to be translated into Mississippi end-of-course performance levels and integrated into the state accountability system. The method draws on aspects of both Body of Work (BoW) – a holistic standard-setting method often used when an assessment contains many open-ended or constructed response items[1] – and Bookmark methods to create an operationally feasible process.

Delegates will learn about how this blended approach was designed and operationalised, including the statistical methodology used to develop ordered item booklets for constructed response items, and consider the challenges at each stage. They will have the opportunity to compare and contrast the BoW and Bookmark aspects of the process side-by-side, and explore the contributions made by these activities to the overall validity of the outcome.

Part 2: Cambridge International accredits a variety of qualifications globally which are owned and administered by other awarding organisations, resulting in co-certification and a statement of equivalency with a Cambridge qualification.

The session will explore why there is a need for qualification equivalency, how a qualification can be broken down into content, demand and awarding standards, and some possible methodologies for establishing standards equivalency when the data for psychometric equating is not available.

The practical activity will involve delegates becoming comfortable with using the CRAS framework[2] to evaluate the demand of questions, and how to set up, run and evaluate a comparative study using the No More Marking platform. We will discuss the benefits and limitations of this methodology for establishing demand equivalency, and what follow up work can usefully be done with the results.
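To give a sense of how paired judgements from a comparative study can be turned into a scale, the sketch below fits a Bradley-Terry model, a common choice for comparative judgement data. This is an assumption made for illustration: the workshop description does not say which model the No More Marking platform uses, and the function name and the script labels P1 to P3 are hypothetical.

```python
from collections import defaultdict


def bradley_terry(comparisons, iterations=200):
    """Estimate Bradley-Terry strengths from (winner, loser) judgements.

    Returns a dict mapping each item to a positive strength; strengths sum to 1.
    Uses the standard iterative (minorisation-maximisation) update.
    """
    wins = defaultdict(int)
    pair_counts = defaultdict(int)
    items = set()
    for winner, loser in comparisons:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1
        items.update((winner, loser))

    strength = {item: 1.0 / len(items) for item in items}
    for _ in range(iterations):
        updated = {}
        for i in items:
            # Sum over every opponent j that item i was actually compared with.
            denom = sum(
                pair_counts[frozenset((i, j))] / (strength[i] + strength[j])
                for j in items
                if j != i and frozenset((i, j)) in pair_counts
            )
            updated[i] = wins[i] / denom if denom > 0 else strength[i]
        total = sum(updated.values())
        strength = {item: value / total for item, value in updated.items()}
    return strength


if __name__ == "__main__":
    # Hypothetical judgements: each tuple is (script judged more demanding, other script).
    judgements = (
        [("P1", "P2")] * 3 + [("P2", "P1")]
        + [("P2", "P3")] * 3 + [("P3", "P2")]
        + [("P1", "P3")] * 2 + [("P3", "P1")]
    )
    for script, value in sorted(bradley_terry(judgements).items()):
        print(script, round(value, 3))
```

In this made-up example P1 receives the highest estimated strength and P3 the lowest. An item that never wins or never loses has no finite estimate under this model, so a real study needs enough judgements per item.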

Preparation for the workshop:

While no specific knowledge or prior reading is required, delegates will need to have a laptop with the ability to connect to WiFi at the venue. They may also wish to bring two question papers whose demand they are interested in comparing.

Tentative Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 9.30-12.00 (Inc. a 15 min break 10:30-11:00) | Block I: Blending Bookmark and Body of Work standard setting methods: a practical solution to linking grading scales across testing traditions. This will include discussion of the problem statement and the development of the methodology. Delegates are then invited to undertake a critique of the method, exploring the impact with real data. | Stuart Gallagher |
| 12.00-13.00 | Lunch | |
| 13.00-14.30 | Block II: Discussion of the need for comparability; exploring the concepts of content, demand and awarding standards; development of qualitative methods to establish equivalency | Georgie Billings |
| 14.30-14.45 | Tea/coffee break | |
| 14.45-16.30 | Block III: Introducing the CRAS framework; practical task setting up a demand standard comparative task in No More Marking and analysing the results | Georgie Billings |

[1] Wyse, A. E., Bunch, M. B., Deville, C., and Viger, S. G. (2014). A Body of Work Standard-Setting Method With Construct Maps. Educational and Psychological Measurement, 74(2), pp. 236–262.

[2] Johnson, M. and Mehta, S. (2011). Evaluating the CRAS Framework: Development and recommendations. Research Matters, (12), pp. 27–32.

Network Analysis for the investigation of Rater Effects (using R).

Iasonas Lamprianou

This workshop introduces the application of Network Analysis (NA) to rater-mediated assessments. NA analyzes rating datasets by considering pairwise comparisons between raters.

Participants will learn how to detect and interpret key rater behaviors, including severity/leniency, inconsistency (misfit), halo effects, bias, drift (changes over time), and the formation of rater sub-communities. A key feature of the workshop is the comparison of NA results with those from traditional approaches such as the Rasch model.

NA is a flexible method that can handle nominal, dichotomous, ordinal, and numeric data. Unlike traditional models that rely on strong assumptions (e.g., local independence or unidimensionality), NA operates with minimal requirements, making it especially suitable for complex or non-standard rating contexts. Visualizations further enhance interpretability.
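
To make the idea of pairwise comparisons between raters concrete, the following is a minimal sketch and not the presenter's method or code. The workshop itself uses R; Python is used here purely for illustration. The ratings, the function names `pairwise_edges` and `leniency_index`, and the choice of a mean signed score difference as the edge weight are all assumptions made for this example.

```python
from itertools import combinations

# Hypothetical ratings: rater -> {script id: score}. Not data from the workshop.
ratings = {
    "R1": {"s1": 4, "s2": 3, "s3": 5, "s4": 2},
    "R2": {"s1": 3, "s2": 2, "s3": 4, "s4": 1},
    "R3": {"s1": 4, "s2": 4, "s3": 5},
}

def pairwise_edges(ratings):
    """Return {(a, b): mean(score_a - score_b)} over scripts both raters scored."""
    edges = {}
    for a, b in combinations(sorted(ratings), 2):
        shared = ratings[a].keys() & ratings[b].keys()
        if not shared:
            continue  # the two raters never scored the same script: no edge
        diffs = [ratings[a][s] - ratings[b][s] for s in shared]
        edges[(a, b)] = sum(diffs) / len(diffs)
    return edges

def leniency_index(edges):
    """Average each rater's signed difference against every connected rater."""
    totals, counts = {}, {}
    for (a, b), d in edges.items():
        # The edge weight counts positively for a and negatively for b.
        for rater, signed in ((a, d), (b, -d)):
            totals[rater] = totals.get(rater, 0.0) + signed
            counts[rater] = counts.get(rater, 0) + 1
    return {r: totals[r] / counts[r] for r in totals}

edges = pairwise_edges(ratings)   # one weighted edge per pair of raters
index = leniency_index(edges)     # one summary value per rater (node)
for rater in sorted(index, key=index.get):
    print(rater, round(index[rater], 3))
```

In this toy network each rater is a node and each edge summarises how two raters differ on the scripts they both scored. A rater with a clearly negative index tends to score lower than colleagues (relative severity), and one with a clearly positive index tends to score higher (relative leniency). Only overlapping ratings are needed, so no measurement model is assumed.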

The workshop emphasizes hands-on experience using open-source R code and real datasets from published studies. A brief theoretical overview will also be provided. Participants are encouraged to bring their laptops and follow along.

Reading list:

Lamprianou (2018) in Educational and Psychological Measurement, 78(3), 430–459.

Lamprianou (2023) in Sociological Methods and Research, 55(1), 525–553.

Lamprianou (2025, in press) in Research Methods in Applied Linguistics.

Lamprianou et al. (2023) in Assessing Writing, 56, 100713.

APPENDIX A: Template pre-conference workshop

WORKSHOP TITLE:

Network Analysis for the investigation of rater effects

Presenters:

Iasonas Lamprianou, University of Cyprus

Presenters’ Bios (500 words max per presenter):

Iasonas Lamprianou is an Associate Professor of Quantitative Methods at the Department of Social and Political Sciences, University of Cyprus. His methodological interests include rater and coding effects, Rasch models, and appropriateness measurement (person-fit).

His recent books include ‘A Step-by-Step Guide to Applying the Rasch Model Using R: A Manual for the Social Sciences’ (2024, 2nd Edition) and ‘Network Analysis for Rating Datasets in R: A Multi-Disciplinary Perspective’ (2025, in press), both by Routledge.

Iasonas has published in journals such as Educational and Psychological Measurement, Sociological Methods and Research, International Journal of Social Research Methodology, Journal of Educational Measurement, Research Methods in Applied Linguistics, Language Testing, Assessing Writing, Assessment in Education, International Journal of Testing, and others.

Why AEA-E members / conference delegates should attend this workshop:

Participants will develop data analysis skills using open-source, cutting-edge tools that can be used either as stand-alone approaches or in complement to existing methods (e.g., Rasch models).

Who this Workshop is for:

Researchers, academics, students, and practitioners who want to enhance their empirical analysis toolbox, particularly in rater designs and rater effects within the broader field of social sciences.

Reading List:

Lamprianou, I. (2018). Investigation of rater effects using Social Network Analysis and Exponential Random Graph Models. Educational and Psychological Measurement, 78(3), 430-459.

Lamprianou, I. (2023). Measuring and visualizing coders’ reliability: New approaches and guidelines from experimental data. Sociological Methods and Research, 55(1), 525-553.

Lamprianou, I. (2025, in press). Network Analysis for the investigation of rater effects: a comparison of ChatGPT vs human raters. Research Methods in Applied Linguistics.

Lamprianou, I., Tsagari, D., & Kyriakou, N. (2023). Experienced but detached from reality: Theorizing and operationalizing the relationship between experience and rater effects. Assessing Writing, 56, 100713.

Preparation for the workshop:

Participants should bring a laptop with R and RStudio installed. More information will be provided.

Tentative Schedule

| Time | Session | Presenter |
| --- | --- | --- |
| 9.30-12.00 (incl. a 15 min break, 10:30-11:00) | Block I: Theory of Rater Effects · Introduction to Network Analysis · Severity/Leniency | Iasonas Lamprianou |
| 12.00-13.00 | Lunch | |
| 13.00-14.30 | Block II: Consistency / Inconsistency · Halo Effects | Iasonas Lamprianou |
| 14.30-14.45 | Tea/coffee break | |
| 14.45-16.30 | Block III: Differential Functioning (drift) · Detection of sub-communities | Iasonas Lamprianou |